Monitoring Tools

The ThingWorx Platform is built on Java technology. The diagnostic and monitoring tools explained in this section collect performance metrics and other data from the Java virtual machine (JVM). Use these tools to monitor your ThingWorx solutions through the JVM layer. The tools get the following data from the JVM:

• Memory operations—Check if the server has enough memory resources allocated, and how long the JVM Garbage Collection (GC) operations take.

• Thread performance—Check if there are any long transactions or threading issues that could cause performance issues in your ThingWorx solution.

Direct JVM Monitoring

Direct JVM monitoring tools are provided by Oracle or the Support Subsystem. Collecting data directly from the JVM is a good way to identify and fix performance issues. It is recommended that you monitor the following JVM diagnostics for your ThingWorx solutions:

• Monitor the memory performance over time using Garbage Collection logging, a built-in capability of the JVM.

• Collect and analyze thread-level data using the Support Subsystem.

Monitor the Memory Usage of a ThingWorx Server

The Java Virtual Machine (JVM) manages its own memory (heap) natively using the Garbage Collector (GC). GC identifies and removes objects in the Java heap that are not in use. You can monitor the memory usage of a ThingWorx server by logging the details collected by the GC.

You can use the GC log file to identify trends in the memory consumption. Depending on the trends, you can check if the maximum heap parameter of the Apache Tomcat server should be changed for better performance. Therefore, it is recommended to set up logging for GC on the Apache Tomcat server.

The JVM accepts flags that are applied when it starts. These flags make the JVM write statistics about GC events to a log, such as the type of GC event, the amount of memory consumed at the start of the event, the amount of memory released by the GC event, and the duration of the GC event.

The GC log file is overwritten every time the JVM is started. It is recommended that you back up the log file when the server restarts. The backup helps you analyze whether memory usage caused the server to restart.

You can use analysis tools, such as GCEasy.io and Chewiebug GC Viewer, to analyze the Garbage Collection logs.
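As a rough illustration of the kind of summary such tools compute, the following sketch totals the pause times in a Java 8 log written with the -XX:+PrintGC option. It assumes that each event line ends with ", <seconds> secs]", as in the sample output shown in the Linux setup section. The function name summarize_gc_log is hypothetical, and logs in other formats (for example, -Xlog output on Java 9 and later) need a different pattern.

```shell
#!/bin/sh
# summarize_gc_log FILE
# Hypothetical helper: counts GC events and reports total and longest pause.
# Assumes Java 8 -XX:+PrintGC lines that end with "<seconds> secs]".
summarize_gc_log() {
  awk '
    match($0, /[0-9]+\.[0-9]+ secs\]$/) {
      # Adding 0 converts the leading number of "0.0728488 secs]" to a numeric value.
      pause = substr($0, RSTART, RLENGTH) + 0
      n++
      total += pause
      if (pause > max) max = pause
    }
    END {
      if (n == 0) { print "events=0"; exit }
      printf "events=%d total=%.3fs max=%.3fs\n", n, total, max
    }
  ' "$1"
}
```

For example, summarize_gc_log /logs/gc.log prints one line with the number of events, the total pause time, and the longest single pause. A longest pause that grows over time can be a hint to review the maximum heap setting.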

How to Set Up Garbage Collection Logging on Linux (setenv.sh)

Perform the following steps to set up Garbage Collector logging on Linux:

1. Open the $CATALINA_HOME/bin/setenv.sh script in a text editor.

The setenv.sh script file may not be available in all installations. If the file does not exist, create a new setenv.sh file at the location.

2. Append the following text to the end of the JAVA_OPTS value, inside the double quotes.

For Java 8:

-XX:+UseG1GC -Xloggc:/logs/gc.log -XX:+PrintGCTimeStamps -XX:+PrintGCDetails

If JAVA_OPTS is not specified in the setenv.sh file or the setenv.sh file is new, add the following line in the file:

JAVA_OPTS="-XX:+UseG1GC -Xloggc:/logs/gc.log -XX:+PrintGCTimeStamps -XX:+PrintGCDetails"

For Java 9 and later:

-Xlog:gc:file=/logs/gc.log:time,level,tags

If JAVA_OPTS is not specified in the setenv.sh file or the setenv.sh file is new, add the following line in the file:

JAVA_OPTS="-Xlog:gc:file=/logs/gc.log:time,level,tags"

Note the following:

▪ The -XX:+PrintGC option returns a less verbose output log. For example, the output log has the following details:

2017-10-10T13:22:49.363-0400: 3.096: [GC (Allocation Failure) 116859K->56193K(515776K), 0.0728488 secs]

▪ The -XX:+PrintGCDetails option returns a detailed output log. For example, the output log has the following details:

2017-10-10T13:18:36.663-0400: 35.578: [GC (Allocation Failure) 2017-10-10T13:18:36.663-0400: 35.578: [ParNew: 76148K->6560K(76672K), 0.0105080 secs] 262740K->193791K(515776K), 0.0105759 secs] [Times: user=0.02 sys=0.00, real=0.01 secs]

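3. Add the following lines at the end of the setenv.sh script. This code automatically backs up an existing gc.out file to gc.out.restart before the Apache Tomcat server starts. If your logging option writes to a different file, such as the /logs/gc.log path used in step 2, change the paths in the script to match: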

# Backup the gc.out file to gc.out.restart when a server is started.

if [ -e "$CATALINA_HOME/logs/gc.out" ]; then

cp -f "$CATALINA_HOME/logs/gc.out" "$CATALINA_HOME/logs/gc.out.restart"

fi

4. Save the changes to the file.

5. Restart the Apache Tomcat service using the service controller that is applicable to your operating system.
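The choice between the Java 8 flags and the Java 9 and later flags in step 2 can be sketched as a small helper for setenv.sh. The function name gc_logging_opts and its two arguments (the Java major version and the log file path) are hypothetical; the option strings are the ones listed in the steps above.

```shell
#!/bin/sh
# gc_logging_opts JAVA_MAJOR LOG_FILE
# Hypothetical helper, not part of Apache Tomcat or ThingWorx.
# Prints the GC logging options from step 2 for the given Java major version.
gc_logging_opts() {
  if [ "$1" -le 8 ]; then
    # Java 8: legacy GC logging flags.
    printf '%s' "-XX:+UseG1GC -Xloggc:$2 -XX:+PrintGCTimeStamps -XX:+PrintGCDetails"
  else
    # Java 9 and later: unified logging with the -Xlog option.
    printf '%s' "-Xlog:gc:file=$2:time,level,tags"
  fi
}

# Example use inside setenv.sh, keeping any options that are already set:
# JAVA_OPTS="$JAVA_OPTS $(gc_logging_opts 11 /logs/gc.log)"
```

Appending to the existing JAVA_OPTS value, rather than overwriting it, keeps other settings such as the heap size in place.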

How to Set Up Garbage Collection Logging on Windows (Apache Service Manager)

Perform the following steps to set up Garbage Collector logging on Windows:

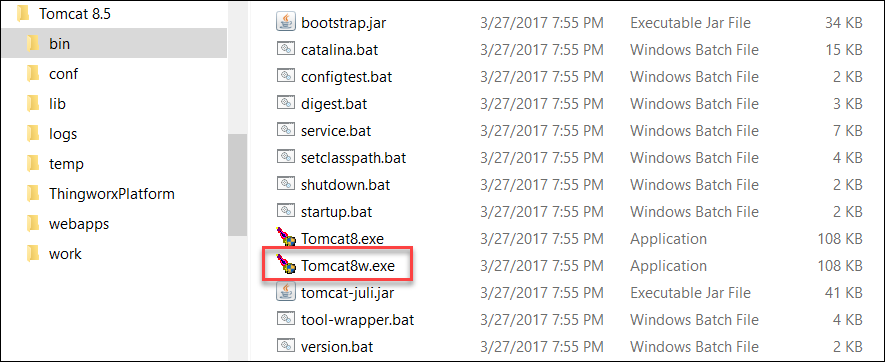

1. In Windows Explorer, browse to <Tomcat_home>\bin.

2. Start the executable that has w in its name. For example, Tomcat<version number>w.exe.

3. Click the Java tab.

4. In the Java Options field, add the following lines:

For Java 8:

-Xloggc:logs/gc_%t.out

-XX:+PrintGCTimeStamps

-XX:+PrintGCDateStamps

-XX:+PrintGCDetails

For Java 9 and later:

-Xlog:gc:file=/logs/gc.log:time,level,tags

For information on the difference between the -XX:+PrintGC and -XX:+PrintGCDetails options, see the section How to Set Up Garbage Collection Logging on Linux (setenv.sh).

5. Click Apply to save the changes to the service configuration.

6. In the General tab, click Stop.

7. Click Start to restart the Apache Tomcat service.

The new garbage collection log is written to %CATALINA_HOME%\logs\gc_<Tomcat_Restart_Timestamp>.out file.

Collect Diagnostic Data Using the Support Subsystem

For information, see Support Subsystem.

VisualVM and Other JMX Monitoring Tools

VisualVM is a monitoring tool that collects data for Java solutions. You can use VisualVM to monitor ThingWorx solutions. It collects information about memory and CPU usage. It generates and analyzes heap dumps and tracks memory leaks. You can collect data for solutions that are run locally or on remote machines.

VisualVM provides an interface that enables you to graphically view the information about Java solutions.

VisualVM is available along with the Java JDK. For more information on connection settings for VisualVM, see the section Apache Tomcat Java Option Settings in the ThingWorx Help Center.

Other tools that integrate with the JVM using Java Management Extensions (JMX) also provide similar monitoring capabilities.
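As an illustration only, remote JMX access is typically enabled with the standard com.sun.management.jmxremote system properties of the JVM. The following setenv.sh fragment is a sketch under assumptions: port 9010 is an arbitrary example, SSL and authentication are disabled for an isolated test system only, and CATALINA_OPTS is used instead of JAVA_OPTS so that the options apply only when the server starts. The Apache Tomcat Java Option Settings section remains the reference for the settings that ThingWorx recommends.

```shell
# Sketch for setenv.sh: open a JMX port so that VisualVM or another JMX client can attach.
# Assumptions: port 9010 is an example value; SSL and authentication are turned off,
# which is acceptable only on an isolated test system.
JMX_PORT=9010
CATALINA_OPTS="$CATALINA_OPTS \
 -Dcom.sun.management.jmxremote \
 -Dcom.sun.management.jmxremote.port=$JMX_PORT \
 -Dcom.sun.management.jmxremote.rmi.port=$JMX_PORT \
 -Dcom.sun.management.jmxremote.ssl=false \
 -Dcom.sun.management.jmxremote.authenticate=false"
export CATALINA_OPTS
```

On a production server, enable authentication and SSL before you open the port to other machines.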

VisualVM captures the following key metrics that impact performance of the solution:

• JVM memory performance

• Time required to execute threads

• Memory of operating system and CPU metrics

• Database connection pool monitoring

VisualVM tracks real-time data for approximately 20 minutes.

ThingWorx Application Logs Monitoring

ThingWorx solution logs should be monitored regularly for errors or other abnormal operations. Errors can occur either at the platform layer, or in the custom scripts used with ThingWorx. It is recommended to review messages in the error log daily.

Configure the LoggingSubsystem Option for Writing Stack Trace for Errors

The Enable Stack Tracing option in LoggingSubsystem writes error messages and associated stack traces to log files. By default, LoggingSubsystem is not configured to write stack traces (call stacks) to disk. Set the Enable Stack Tracing option to write call stacks of exceptions and error messages to the ErrorLog.log file that is available in the ThingworxStorage/logs folder. It is recommended to set this option so that the stack trace shows the details of function calls while you debug an error.

Retain the Application Logs

The log level determines how much detail is written to the logs. It is recommended to keep the log level of the solution at a low verbosity, such as Info or Warn, unless you are actively troubleshooting an issue. Be careful when you increase the log verbosity to Debug, Trace, or All. Higher verbosity can degrade the performance of ThingWorx, and it can cause unpredictable behavior of the solution if the resources available on the ThingWorx Platform are insufficient.

Logs are saved as separate files on the server in the /ThingworxStorage/logs folder. The logs are archived in the archives folder located in the logs folder. The log rotation rules are based on the file size and time configurations that are set in the Log Retention Settings of the logging subsystem. These settings can be changed and applied at runtime.

You can specify the following rollover configurations in the Log Retention Settings of the logging subsystem:

• File size—The log file is archived when its size reaches or exceeds the size defined in the Maximum File Size In KB field. The default file size for a log is set to 100000 KB. The maximum file size that you can set is 1000000 KB.

• Time—The files are rolled over daily at midnight, when a log event is triggered. The logs are moved to the archives folder. By default, if a log is in the archives folder for more than seven days, it is deleted. The default can be changed in the Maximum Number Days For Archive field. You can specify 1 to 90 days.

It is important to monitor the log sizes. Purge the log files regularly: back them up first, or discard them when they contain obsolete information.
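A minimal sketch of such a check, using the standard du and find utilities, is shown below. The function names log_dir_size_kb and list_stale_archives are hypothetical, and the sketch assumes the archives folder sits directly inside the logs folder, as described above.

```shell
#!/bin/sh
# log_dir_size_kb DIR
# Prints the total disk usage of DIR, including subfolders, in KB.
log_dir_size_kb() {
  du -sk "$1" | awk '{ print $1 }'
}

# list_stale_archives DIR DAYS
# Lists files under DIR/archives whose age in whole days is greater than DAYS
# (the "-mtime +N" semantics of find). It only lists files; it deletes nothing.
list_stale_archives() {
  find "$1/archives" -type f -mtime +"$2" -print
}
```

For example, list_stale_archives /ThingworxStorage/logs 7 lists archived logs that are past the default seven-day retention period, so that you can back them up or confirm that they were purged as expected.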