Example: Linear Prediction Methods

Use the burg and yulew functions to generate coefficients for the named linear prediction model. These functions implement the Burg method and the Yule-Walker algorithm, respectively. For a description of these algorithms and the mathematics behind them, see Sophocles J. Orfanidis, Optimum Signal Processing, Macmillan (1989).

Yule-Walker Prediction

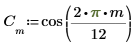

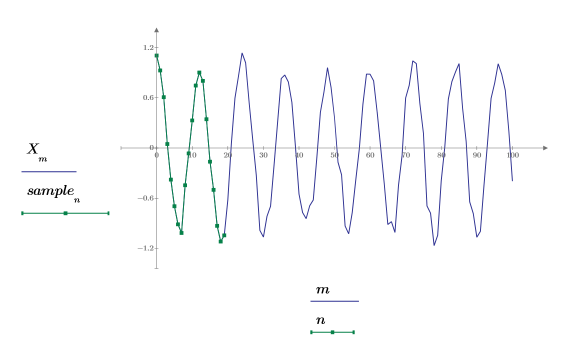

1. Use the cos function to define a cosine signal.

2. Plot the signal.

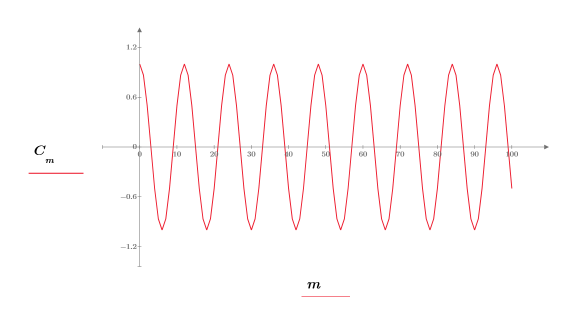

3. Use the rnd function to add random noise to the signal.

4. Plot the original and the noisy signals.

The 0.2 is subtracted from the signal to center the random component around amplitude 0.

5. Take a short sample set from the beginning of the signal.

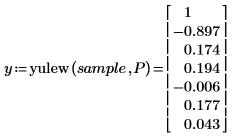

6. Set the prediction order, and use the yulew function to compute the coefficients.

◦ These coefficients are for an all-pole filter which approximates the original signal. As expected, there is one large pole, representing the cosine, and several smaller ones, approximating the noise.

◦ Order P determines the number of consecutive values used to predict the next value in the sequence. The first through sixth element of the coefficient vector are used, ignoring the zeroth element, which is always 1. This 1 is needed when y is used as a prediction error filter to generate the complete set of prediction errors.

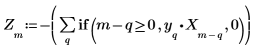

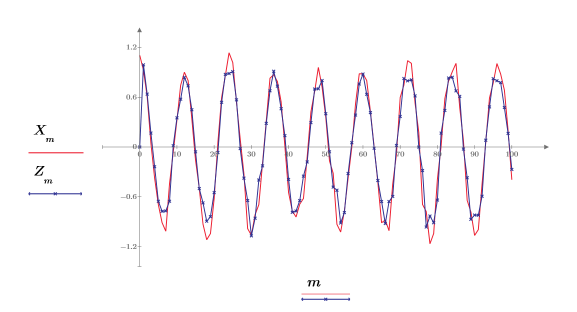

7. Compare the predicted points, calculated using the previous P points in the signal, to the original data. This shows how good the filter would be if used in place of signal X.

8. Plot the original and the predicted signal.

The approximation is very good, even if using only the first 20 points of data.

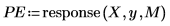

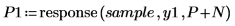

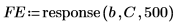

9. Use the response function to generate the prediction errors for the Yule-Walker coefficients using the coefficient array as a filter, then computing the response with the sample as input.

The full coefficient array is sometimes called a prediction-error filter.

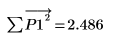

10. Verify that these errors do indeed give the difference between the two previous plots.

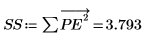

The Yule-Walker algorithm minimizes the sum of the squares of the prediction errors.

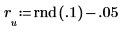

11. Spot check the minimization by perturbing y (adding a small random amount to all coefficients except the first) and recomputing the sum (by placing the cursor on the rnd function below and pressing [F5] a few times).

Burg Prediction

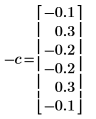

1. Construct a sequence for which linear prediction ought to work well: an autoregressive process with known coefficients.

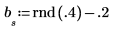

2. Initialize the time series.

3. Generate the rest of the series by using the autoregression plus noise.

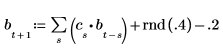

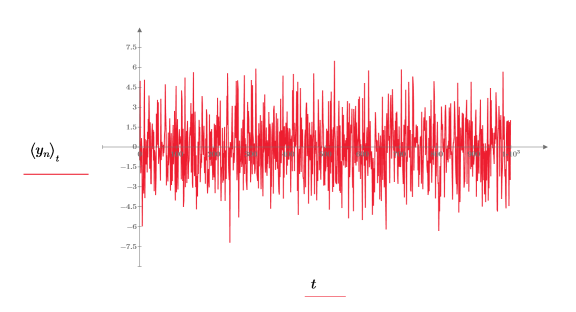

4. Take the whole time series as the sample and compute coefficients for a model of order 6, and then use the burg function to compute the coefficients.

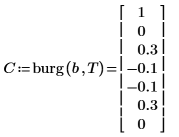

5. Compare the coefficients that actually generated the process, using the sign convention of this document.

◦ The zeroth coefficient was left out, since it is always equal to one.

◦ The computed elements 1 and 5 of C are different.

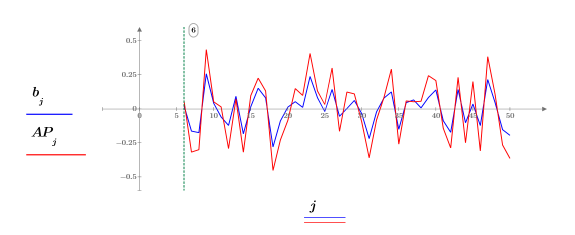

6. Use the Burg coefficient vector C to generate the prediction errors.

◦ The Burg method assigns 0 weight to the first P error, so the minimization is not affected by the zero-padding of the sample, as is the minimization carried out by Yule-Walker.

◦ The Burg criterion also involves both the forward errors FE and the backward errors BE.

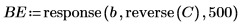

7. Use the response and reverse functions to compute the backward errors.

8. Use the prediction errors FE and the autoregression function b to compute the actual predictions AP, and then plot a piece of the predicted and actual series starting at the Pth term.

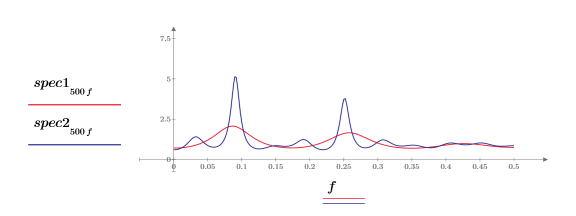

Spectral Estimation

You can use coefficients generated by the linear prediction methods to estimate the power spectrum of the process being modeled. In this context the Burg algorithm is known as maximum entropy spectrum analysis (MESA), and in some cases it can more accurately determine the spectra of short time series than can the FFT.

Estimate the power spectrum of a process consisting of a sum of sinusoids with Gaussian noise added.

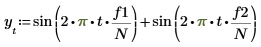

1. Use the sin function to define a signal that has two sine components.

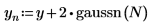

2. Use the gaussn function to add some Gaussian noise.

The gaussn function returns an n-element vector of random numbers following a Gaussian probability distribution of mean 0 and standard deviation of 1.

3. Define two orders for the linear prediction.

4. Use the burg function to estimate the spectrum with autoregressive models of the two orders, using the whole time series as the sample.

5. Use the gain function to calculate the corresponding power spectra, as the squared magnitude of the transfer function defined by the coefficient arrays F1 and F2.

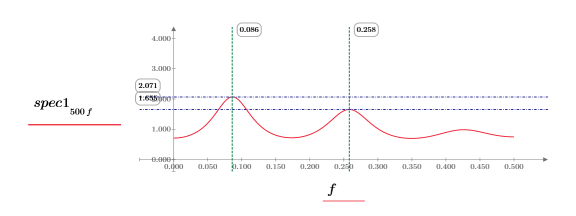

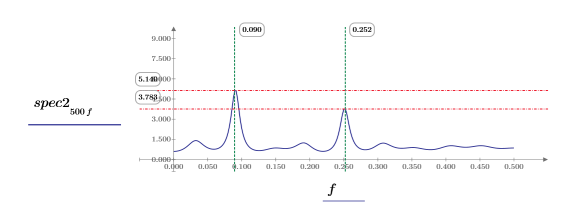

6. Plot the two power spectras.

◦ Each power spectra has two peaks.

◦ The lower, as well as the higher, frequency peaks for both spectras occur at about the same frequency.

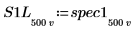

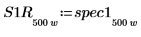

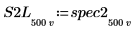

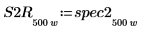

7. Subdivide the spec1 and spec2 curves into two segments each to facilitate finding their gain peaks and the frequencies at which they occur.

|  |

|  |

|  |

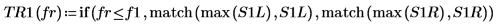

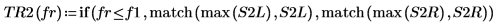

8. Use the if function to define a function that returns the frequency at which the given segment has a peak, and then use it to find the left-side and right-side peaks of each spectra trace.

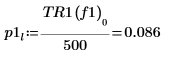

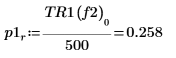

| |

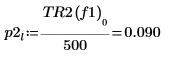

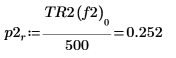

| |

|  |

|  |

9. Use the max function to calculate the magnitude of each peak.

|  |

|  |

10. Plot each function separately. Use horizontal and vertical markers to show the magnitude of each peak and the frequency at which it occurs.

◦ Observe the two plots while recalculating the worksheet.

◦ The magnitude of the peaks vary with each recalculation and they usually have different values. It is, however, possible for the magnitudes to be equal.

◦ The peaks occur at about the same frequencies.

For more on this method of spectral estimation, see Digital Spectral Analysis with Applications by S. Lawrence Marple, Jr. (Prentice-Hall, Inc).